Today, privacy mostly relates to our ability to control how online platforms, government agencies, and corporations use, store, exchange, and modify our data.

Such organizations can affect people’s lives even without their consent.

The way our data is collected and used raises concerns for many people, and the emergence of artificial intelligence (AI) has only exacerbated them. AI can increase the data-gathering capabilities of social actors because it can collect and analyze vast quantities of information from different sources.

At the same time, the General Data Protection Regulation (GDPR) enacted by the European Union is changing the online landscape even outside of Europe. But now, with the emergence of AI, what will “the right to be forgotten” look like in the future?

How Did We Get Here?

The infusion of artificial intelligence into our daily lives and the trend of prolific data collection have both happened with minimal oversight. We haven’t given much thought to what the consequences could be. The harvested data itself has fueled the growth of AI by feeding AI algorithms.

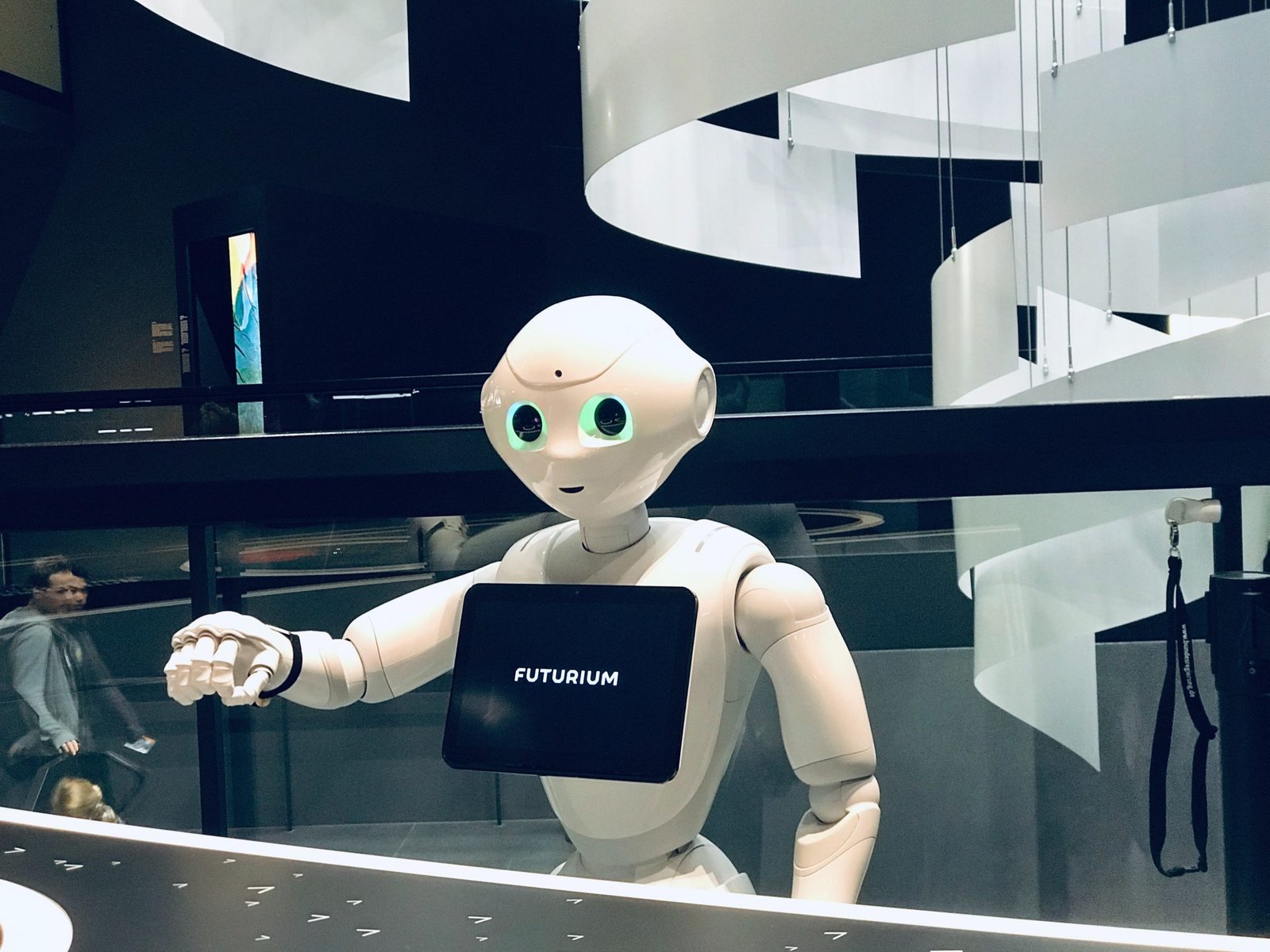

Computers can now make decisions based on intuition-like pattern recognition and experience, much as humans do. This has helped organizations develop conversational AI platforms that are the basis of most virtual assistants.

Thanks to virtual assistants like Siri and Cortana, many people see the goal of improving AI as creating even better and more human-like helpers that can improve their lives and experiences with tech. But, while some organizations are using AI to give their tech a human face, others are taking AI in a completely different direction.

How AI May Compromise Privacy in the Future

Scale, speed, and automation: these are the key elements that have made AI attractive for use in data collection. In the future, these properties of AI will only improve. Already, AI is capable of computing much faster than human analysts.

As hardware gets better, organizations will be able to keep scaling up AI's computing power. Although some dispute it, AI is widely considered to be the fastest way of processing big data.

Moreover, AI can greatly improve analysis efficiency without supervision. All of this enables AI to affect privacy in the following ways:

Identification and Tracking

Organizations can use AI to identify, monitor, and track a single individual across multiple devices, whether the individual is at a public location, at home, or at work. Once the individual’s data becomes part of a bigger data set, AI can leverage inferences from other devices to de-anonymize this information, even if the individual’s personal data has been anonymized.
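To make the de-anonymization risk concrete, here is a minimal sketch of a so-called linkage attack, written in Python. It is not taken from any real system: every record, name, and field (`zip`, `birth_year`, `visited`) is invented for illustration, and a real attack would typically use far larger data sets and fuzzier matching. The idea is simply that details left in an "anonymized" data set can be matched against a second data set that still carries names.

```python
# Toy linkage attack: every record below is made up for illustration.

# "Anonymized" data set: names removed, but quasi-identifiers remain.
anonymized_records = [
    {"zip": "02138", "birth_year": 1985, "device": "phone", "visited": "clinic"},
    {"zip": "02139", "birth_year": 1990, "device": "laptop", "visited": "gym"},
    {"zip": "02138", "birth_year": 1972, "device": "tablet", "visited": "bank"},
]

# Auxiliary data set from another source that still carries names.
public_records = [
    {"name": "Alice Example", "zip": "02138", "birth_year": 1985},
    {"name": "Bob Example", "zip": "02139", "birth_year": 1990},
    {"name": "Carol Example", "zip": "02140", "birth_year": 1972},
]

# Fields assumed to be shared by both data sets (hypothetical choice).
QUASI_IDENTIFIERS = ("zip", "birth_year")


def link_records(anonymized, auxiliary, keys=QUASI_IDENTIFIERS):
    """Pair each anonymized record with a name when its quasi-identifiers
    match exactly one person in the auxiliary data."""
    index = {}
    for person in auxiliary:
        index.setdefault(tuple(person[k] for k in keys), []).append(person["name"])

    matches = []
    for record in anonymized:
        candidates = index.get(tuple(record[k] for k in keys), [])
        if len(candidates) == 1:  # a unique match re-identifies the record
            matches.append((candidates[0], record))
    return matches


reidentified = link_records(anonymized_records, public_records)
for name, record in reidentified:
    print(f"{name} -> {record['visited']}")
```

In this toy example, two of the three "anonymous" records end up tied to a name, even though neither data set looks sensitive by itself.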

Present legislation has maintained the distinction between personal and nonpersonal information. The introduction of AI may blur this line.

Data Exploitation

Computer apps, smart home appliances, and many other products come with features that AI could exploit. Moreover, software on such devices can collect, process, and share large quantities of data. End-users are usually unaware of this. The potential for exploitation will increase as we become even more reliant on tech.

Voice and Facial Recognition

Artificial intelligence is steadily improving at facial recognition and voice recognition. In the public sphere, both can critically compromise anonymity.

For instance, law enforcement agencies may use these methods to find individuals without reasonable suspicion or probable cause. This would allow them to bypass legal procedures.

Prediction

Artificial intelligence can predict or infer sensitive information from non-sensitive data by using sophisticated machine learning algorithms. For example, AI can analyze an individual’s keyboard typing patterns and deduce states such as anxiety, sadness, confidence, and nervousness.
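As a rough illustration of this kind of inference, the Python sketch below trains a very simple nearest-centroid classifier on typing features. It is an assumption-laden toy, not a description of how any real product works: the two features, the numbers, and the "calm" and "stressed" labels are all synthetic, and real systems would use much richer signals and models.

```python
from statistics import mean

# Synthetic training data, invented for illustration only:
# (mean gap between key presses in ms, backspaces per 100 keys).
training = {
    "calm": [(180.0, 2.0), (170.0, 3.0), (190.0, 2.5)],
    "stressed": [(120.0, 8.0), (110.0, 9.5), (130.0, 7.0)],
}


def centroid(points):
    """Average each feature across a list of points."""
    return tuple(mean(axis) for axis in zip(*points))


# One "typical" point per label, learned from the training data.
centroids = {label: centroid(points) for label, points in training.items()}


def predict(sample):
    """Return the label whose centroid is closest to the sample
    (squared Euclidean distance, features left unscaled for simplicity)."""
    def distance(label):
        return sum((a - b) ** 2 for a, b in zip(sample, centroids[label]))
    return min(centroids, key=distance)


print(predict((125.0, 8.5)))  # fast typing with many corrections
print(predict((185.0, 2.0)))  # slower typing with few corrections
```

The point of the sketch is that nothing in the input looks sensitive. Typing speed and correction counts are mundane measurements, yet a model can turn them into a guess about someone's state of mind.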

AI could also use location data, activity logs, and similar metrics to determine a person’s ethnic identity, political views, and sexual orientation. Moreover, AI may be able to determine someone’s overall health by using such metrics.

Profiling

As indicated, artificial intelligence can use data as an input for evaluating, classifying, scoring, sorting, and ranking people. Problems arise when this is done without people’s consent.

In China, something like this is already taking place. Chinese government agencies use an invisible web of Big Data and facial recognition for their social credit system. The country plans on creating a facial recognition system that could recognize people within 3 seconds.

Among other things, China uses its social credit system and the information gathered through it to restrict some citizens' access to social, employment, credit, or housing services if the government considers their behavior inappropriate.

The question is whether other countries will follow suit. In Europe, experts are urging the European Union to ban AI-designed social credit systems.

Conclusion

AI has improved many different areas of our lives. It allows us to tackle many problems that previously had no solution. However, various social actors can use artificial intelligence against us. If we do not make an effort to properly understand this technology and its potential, we might face a total loss of privacy.